Thomas Copeland, BBC Verify

An unprecedented wave of AI-generated misinformation about the US-Israel war with Iran is being monetised by online creators with growing access to generative AI technology, experts have told BBC Verify.

Our analysis has found numerous examples of AI-generated videos and fabricated satellite imagery being used to make false and misleading claims about the conflict which have collectively amassed hundreds of millions of views online.

"The scale is truly alarming and this war has made it impossible to ignore now," says Timothy Graham, a digital media expert at the Queensland University of Technology.

"What used to require professional video production can now be done in minutes with AI tools. The barrier to creating convincing synthetic conflict footage has essentially collapsed," he says.

The US and Israel began launching strikes on Iran on 28 February. In response, Iran has launched drone and missile attacks on Israel, as well as multiple Gulf nations and US military assets in the region.

Many have turned to social media to search for and share the latest information and to help make sense of a fast-moving week of conflict.

The platform X announced this week it will temporarily suspend creators from its monetisation programme if they post AI-generated videos of armed conflict without a label.

The scheme pays eligible users whose posts generate large numbers of views, likes, shares and comments.

"It's a notable signal that they've noticed that this is a big problem," says Mahsa Alimardani, a researcher specialising in Iran at the Oxford Internet Institute.

We asked TikTok and Meta, the parent company of Facebook and Instagram, if they intend to take similar action, but they did not respond to our requests for comment.

A typical example of an AI-generated video that BBC Verify has tracked appears to show missiles striking the city of Tel Aviv in Israel as the sound of explosions rings out in the background.

This video has been featured in more than 300 posts which have then been shared tens of thousands of times across social media platforms.

Some X users turned to the platform's AI chatbot Grok to confirm the video's veracity. But in many cases seen by BBC Verify, Grok wrongly insisted that the AI-generated video was real.

Another fake video, viewed tens of millions of times, claims to show Dubai's Burj Khalifa skyscraper in flames, while a crowd of people seem to be running towards the building.

This AI-generated footage spread widely online at a time of considerable concern from residents and tourists about the drone and missile strikes on the city.

"Fake videos like these have a detrimental impact on people's trust in the verified information they see online and make it much harder to document real evidence," says Alimardani.

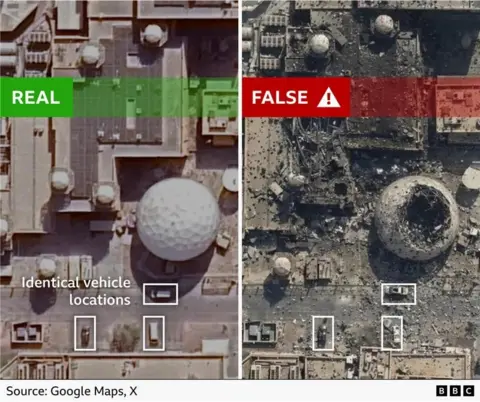

A new feature of this conflict analysed by BBC Verify is the emergence of AI-generated satellite imagery.

We verified multiple real videos showing Iranian drone and missile strikes on the US Navy's Fifth Fleet headquarters in Bahrain on the first day of the conflict.

A fabricated photo, shared on X by the state-linked newspaper The Tehran Times, began to spread the following day and claimed to show extensive damage to the base.

The fake appears to be based on real satellite imagery of a US naval base in Bahrain taken in February 2025, which is publicly available online.

According to Google's SynthID watermark detector, the fake image was generated or edited with a Google AI tool.

Three vehicles parked outside are also in the exact same spot in both the genuine satellite imagery and the AI picture - despite the photos allegedly having been taken a year apart.

Google's AI tools, including its video generator Veo, are part of a growing list of popular AI platforms, alongside OpenAI's Sora model, Chinese AI app Seedance, and Grok, which is built into X.

"The number of different tools that are now available to create a wide range of highly realistic AI manipulations is unprecedented," says Henry Ajder, a generative AI expert.

"We have never seen these tools so available, so easy and so cheap to use," he says.

This has led to a surge of AI-generated content online "because the pipeline onto social media can now be almost fully automated," says Victoire Rio, executive director of the technology policy non-profit What To Fix.

This fake image of a huge explosion at a US base in Iraq has been manipulated using AI based on a real picture showing a much smaller cloud of smoke.

X's head of product said on Tuesday that "99%" of the accounts spreading AI-generated videos like these were trying to "game monetization" by posting content that will generate large amounts of engagement in return for payment through the app's Creator Revenue Sharing programme.

The platform does not publish how many accounts are part of the programme, or how much money they can make.

But Graham estimates that X could pay about "eight to 12 dollars per million verified user impressions".

"Creators have to hit five million organic impressions in three months, plus hold an X premium subscription, to be eligible," he added.

"Once you're in, viral AI-generated content is basically a money printer," he says. "They've built the ultimate misinformation enterprise."
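To give a sense of scale, Graham's estimate can be turned into a rough calculation. The sketch below is illustrative only: the rate is his estimate rather than a figure published by X, the function name and the 50 million impression example are hypothetical, and the view counts reported elsewhere in this article are not necessarily "verified user impressions".

```python
# Graham's estimated payout range, in US dollars per million
# verified-user impressions. This is an estimate, not a published X rate.
RATE_LOW_USD = 8.0
RATE_HIGH_USD = 12.0


def estimated_payout(verified_impressions: int) -> tuple[float, float]:
    """Return the (low, high) estimated payout in US dollars."""
    millions = verified_impressions / 1_000_000
    return (millions * RATE_LOW_USD, millions * RATE_HIGH_USD)


# Hypothetical example: an account whose posts reach 50 million
# verified-user impressions.
low, high = estimated_payout(50_000_000)
print(f"${low:,.0f} to ${high:,.0f}")  # prints "$400 to $600"
```

On these assumptions, the return per post is modest, which suggests the incentive lies in producing such content cheaply and at volume.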

X did not respond to our request for comment or our questions about the Creator Revenue Sharing programme.

Experts have told BBC Verify that while many social media companies say they are trying to change their moderation and detection systems to address the scale and speed at which AI-generated content spreads, there is no simple solution to the problem.

"The deeper issue is that engagement-driven monetisation and accurate information are fundamentally in tension, and no platform has fully resolved that tension or perhaps ever will," says Graham.
